CIGAL Overview

CIGAL is a comprehensive high-level interactive programming environment. For real-time behavioral control in fMRI, a CIGAL application program named ShowPlay was created for stimulus presentation and response recording. This ShowPlay application was designed as a general-purpose fMRI interface to accommodate a wide variety of behavioral paradigm designs. Because CIGAL is typically configured to load the ShowPlay graphical user interface (GUI) automatically, CIGAL appears to the user to be a dedicated fMRI behavioral control program. ShowPlay itself is transparent and there is no need for most users to learn anything at all about CIGAL’s programming language. For more advanced users or for particularly complicated paradigms, the modular ShowPlay application interface can be easily customized to meet specific needs using CIGAL’s extensive programming capabilities (see ‘Modularity’ below).

For more information on the CIGAL programming language, see CIGAL Language. Additional general information is available in the Preface and Introduction sections of CIGAL's on-line manual.

The rest of this page focusses on CIGAL features specifically designed for fMRI.

CIGAL's real-time multi-tasking processor

The unique feature that makes CIGAL particularly well suited for sophisticated fMRI behavioral control is its real-time processor command. This feature was added specifically for fMRI (Voyvodic, 1999) in order to provide accurate timing of stimulus and response events. The processor uses the computer’s standard real-time clock to keep time with 20 microsecond accuracy. Almost all CIGAL real-time operators execute in 60 microseconds or less (Voyvodic, 1999), although a few I/O operators can take up to 20 milliseconds (e.g. 16 ms to redraw an entire 1024×768 3MB display). In a single real-time command list, therefore, the execution onset of any particular command can be controlled with sub-millisecond accuracy, and the speed with which multiple commands can be executed in sequence is limited only by the computer’s CPU and I/O speeds.

CIGAL’s real-time processor was designed to be multi-tasking so that it can run any number of real-time object modules simultaneously. Within a single real-time program, blocks of code can be specified to run synchronously or asynchronously, and the compiler creates a separate object module for each asynchronous block. During execution, the real-time scheduler monitors all active modules to automatically determine which operation is next to run. Timing conflicts are resolved via a priority hierarchy. The result is that a standard PC with a single CPU can run a large number of asynchronous program modules in parallel, with no programming effort necessary to interleave individual tasks. Almost all operations with specified real-time execution onsets actually execute within approximately 100 microseconds of their scheduled times (Voyvodic, 1999). Occasionally a scheduled event may be delayed for a few milliseconds if it conflicts with a slow higher-priority event. Such conflicts are rarely significant in fMRI, but when they are, they can be explicitly avoided simply by specifying high or low priority flags for critical operations. During execution, the actual onset time of all important operations is time-stamped and logged with 20 microsecond precision, so regardless of intended execution speed, users receive a real-time record of the true timing of every event that occurs.
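The scheduling idea described above can be illustrated with a small Python sketch. This is not CIGAL code and does not reflect how the real-time processor is implemented; it only shows one plausible way to pick the next operation from several modules by scheduled onset, breaking ties with a priority value. The function name, the tuple layout, and the convention that a lower number means higher priority are all assumptions made for illustration.

```python
import heapq

def execution_order(operations):
    """Return (onset_ms, module) pairs in the order a scheduler would run them.

    operations: iterable of (onset_ms, priority, module_name).
    Assumption: a lower priority number wins when two onsets coincide.
    """
    # The enumeration index keeps ordering stable for identical (onset, priority).
    heap = [(onset, prio, i, name) for i, (onset, prio, name) in enumerate(operations)]
    heapq.heapify(heap)
    order = []
    while heap:
        onset, _prio, _i, name = heapq.heappop(heap)
        order.append((onset, name))
    return order

# Hypothetical operations from four asynchronous modules.
ops = [
    (500.0, 1, "stimulus"),
    (0.0, 2, "button_box"),
    (500.0, 0, "scanner_sync"),
    (250.0, 2, "joystick"),
]
print(execution_order(ops))
```

In this toy example the two operations scheduled at 500 ms conflict, and the one with the higher priority (scanner_sync) runs first.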

Stimulus presentation

ShowPlay takes as input a simple text file specifying a list of basic parameter settings (e.g. background screen color, initial delay times) and a list of stimulus events (Sample input file). Stimulus events can be static images, animated movies (.AVI files), sound files, text stimuli, video graphics, or commands that communicate with external hardware devices (e.g. via parallel or serial communication ports). The event list can also include commands that wait for subject responses or other external events and then adjust the timing of subsequent events depending on the wait response. Each stimulus event specifies exactly when it should occur (with millisecond accuracy), when it should end, and if it is a video event, where it should appear on the screen. Multiple video stimuli can be presented simultaneously with auditory stimuli. Each stimulus event specification also allows flags to code special handling options and to allow for user-defined categorization of each stimulus. These flags can also indicate which specific stimulus events expect subject responses, so that stimulus/response pairs can be matched in the output data. Before execution, ShowPlay sorts the event list in chronological order and preloads stimuli if requested.
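To make the event-list idea concrete, here is a minimal Python sketch of reading a list of stimulus events and sorting it chronologically. The column layout used here (onset, end, kind, source, response flag) is a hypothetical format invented for this example; it is not the actual ShowPlay input syntax, which is documented in the sample input file.

```python
from dataclasses import dataclass

@dataclass
class StimulusEvent:
    onset_ms: int           # when the stimulus should start
    end_ms: int             # when the stimulus should end
    kind: str               # e.g. "image", "sound", "text" (illustrative labels)
    source: str             # file name or literal text
    expects_response: bool  # True if a subject response is expected

def parse_events(text):
    """Parse the hypothetical event format and return events sorted by onset."""
    events = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and blank lines
        if not line:
            continue
        onset, end, kind, source, flag = line.split()
        events.append(StimulusEvent(int(onset), int(end), kind, source, flag == "R"))
    return sorted(events, key=lambda e: e.onset_ms)

SAMPLE = """
# onset_ms end_ms kind source flag   (hypothetical layout)
3000 3500 sound tone.wav -
1000 2000 image face01.bmp R
2000 3000 text READY -
"""

for event in parse_events(SAMPLE):
    print(event)
```

The events may be written in any order in the file; sorting by onset before execution mirrors the chronological sort described above.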

Response recording

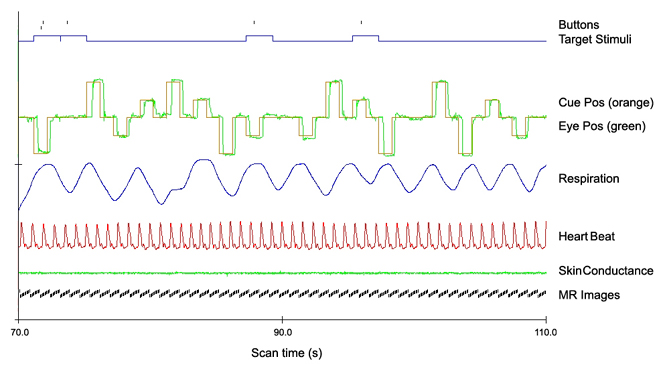

Whereas task-specific stimuli need to be explicitly specified for each behavioral paradigm, recording of responses usually just involves specifying what type of response devices to record and how often. ShowPlay sets up a separate asynchronous real-time program module for each type of input device, all of which run in parallel to ensure that any input from any device is recorded with millisecond accuracy. A typical CIGAL fMRI paradigm, therefore, records response events arising from keyboard, mouse, button box, or joystick. At Duke we also routinely record analog signals via an A/D device (National Instruments Inc.) to provide a continuous record of MRI scanner pulses, as well as cardiac and respiratory oscillations. For paradigms that produce emotional responses we can also routinely monitor galvanic skin conductance via an A/D input. CIGAL can also read and write parallel and serial I/O ports in real-time. We have recently added eye-tracker monitoring to CIGAL by connecting a serial port to receive real-time streaming eye position X/Y data from a second computer running eye-tracker image processing software (Arrington Research, Inc.). An important practical advantage in having CIGAL present stimuli and simultaneously record all of the different response measurements is that the timing of all events is automatically synchronized (see Figure). If they wish, users also have the option of collecting data on multiple separate computers using CIGAL to send triggering signals synchronized to predetermined real-time task events.

Figure: Example of multi-channel time course data recorded by CIGAL during an fMRI scan. The paradigm was a visually guided saccade task with central fixation and saccades to and from eccentric targets (1 Hz). The bottom black ticks indicate the time of each MR image acquisition (14 interleaved slices); the lower green trace is skin conductance sampled by A/D at 100 Hz. Heart beat (red) and respiration (blue) were recorded from scanner transducers via streaming serial port input (80 Hz). The horizontal displacement of saccade targets is shown in orange, with a superimposed green trace of horizontal eye position recorded via streaming serial port input from a separate eye-tracking computer (30 Hz). The upper blue curve shows the timing of targets expecting button presses, and the black tick marks above the curve show the identity and timing of the button responses. CIGAL can routinely record and display behavioral and physiological data like this for any paradigm with no programming required by the user.
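Because every input channel is time-stamped against the same clock, per-device records can be combined into a single chronological record without any alignment step. The Python sketch below illustrates that point only; the record layout (time in ms, device name, value) and the function name are assumptions for this example, not CIGAL's output format.

```python
import heapq

def merge_logs(*device_logs):
    """Merge per-device logs into one list ordered by time stamp.

    Each log is a list of (time_ms, device, value) records that is already
    in time order, with all time stamps taken from one shared clock.
    """
    return list(heapq.merge(*device_logs, key=lambda record: record[0]))

# Hypothetical records from two input modules.
buttons = [(1210.4, "button", 1), (2305.0, "button", 2)]
scanner = [(0.0, "scanner", "pulse"), (2000.0, "scanner", "pulse")]

for record in merge_logs(buttons, scanner):
    print(record)
```

If the devices were recorded on separate computers with unsynchronized clocks, a merge like this would not be valid until the clocks had been aligned, which is the practical advantage of recording everything in one program.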

Programmable graphical user interface (GUI)

Although CIGAL provides extensive programming capabilities, most users only interact with the software through customized interactive GUIs. When using CIGAL for fMRI the ShowPlay interface automatically loads a set of task bars, pull-down menus, dialog menus, and alert windows that provide all the necessary tools for running behavioral paradigms. CIGAL itself contains no predefined menus or dialogs. Instead, all menus or dialogs are specified by simple text files and multiple menus can be loaded and used without any preprocessing. The ShowPlay GUI, therefore, is simply a collection of text files that together create an intuitive user interface for fMRI. Users can easily modify the interface or add new features simply by editing the GUI text files. The menu programming features also make it easy for individual users to organize their own paradigm files into simple-to-run protocol menus. By creating such menus the operator at the scanner can systematically run an entire fMRI protocol simply by stepping through the menu items. In general, any CIGAL command or program can be associated with any GUI widget, which allows enormous flexibility for customizing point-and-click GUI tools to meet specific needs.
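The general idea of a text-defined protocol menu, where each item is a label bound to a command, can be sketched in Python as follows. The "label = command" syntax, the command strings, and the file names are all invented for this example; CIGAL's real menu file format is described in its on-line manual and is not reproduced here.

```python
def parse_menu(text):
    """Parse a hypothetical 'label = command' menu description.

    Returns a list of (label, command) pairs in file order, so the operator
    can step through the protocol from top to bottom.
    """
    items = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        label, command = (part.strip() for part in line.split("=", 1))
        items.append((label, command))
    return items

# Hypothetical protocol menu; labels, commands and file names are placeholders.
PROTOCOL_MENU = """
# label = command
Motor localizer = run motor_localizer.txt
Saccade task    = run saccade_task.txt
Resting state   = run rest.txt
"""

for label, command in parse_menu(PROTOCOL_MENU):
    print(f"{label!r} -> {command!r}")
```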

Modularity

For users who are interested in programming specialized features in CIGAL behavioral paradigms, the ShowPlay program was intentionally designed to be easy to modify. This was done by coding each different aspect of behavioral control and monitoring in separate program module text files. For example, one module is responsible for stepping through the event list and presenting stimuli, other modules include synchronization with the MRI scanner, recording button box responses, recording joystick responses, recording eye-tracking, and recording analog cardiac and respiratory oscillations. At run-time, these text file modules are concatenated to create the full real-time program, which is then compiled and run. Most behavioral paradigms simply use the default task modules, but users are also free to modify, add, or omit individual modules to create customized paradigms. For example, a particular paradigm may want to have joystick motion dynamically control some aspect of the video display. ShowPlay’s modular design allows users to program this simply by modifying the joystick-reading module to modify the video display. When necessary, separate modules can be programmed to interact with each other by means of common data variables. In most cases, however, the timing of any individual module can be changed without affecting other modules because the multi-tasking real-time processor looks after interleaving all the modular code at run-time to obtain correct timing.
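The run-time assembly step described above can be sketched in Python. The sketch only illustrates concatenating selected module texts, with an optional user replacement for one module; the module names and their placeholder contents are hypothetical and are not CIGAL source code.

```python
def build_program(module_sources, selected, overrides=None):
    """Concatenate the selected module texts into one program text.

    module_sources: dict mapping module name to its default text.
    selected: module names to include, in order.
    overrides: optional dict of user-modified texts that replace defaults.
    """
    overrides = overrides or {}
    parts = [overrides.get(name, module_sources[name]) for name in selected]
    return "\n".join(parts)

# Placeholder module texts (not CIGAL syntax).
DEFAULT_SOURCES = {
    "present_stimuli": "<default stimulus presentation module>",
    "scanner_sync": "<default scanner synchronization module>",
    "button_box": "<default button box module>",
    "joystick": "<default joystick module>",
}

# A paradigm that omits the button box and customizes the joystick module.
program = build_program(
    DEFAULT_SOURCES,
    selected=["present_stimuli", "scanner_sync", "joystick"],
    overrides={"joystick": "<joystick module that also updates the display>"},
)
print(program)
```

Because each module is an independent block of text, omitting or replacing one leaves the others untouched, which is the property the modular design relies on.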

Real-time behavioral monitoring

We have found that it is not enough to simply create behavioral stimuli and tell subjects what to do during an fMRI scan. It is also a good idea to monitor what the subjects actually do, in order to optimize the probability of obtaining data that will be suitable for analysis. To facilitate this, CIGAL provides tools for monitoring the subject’s behavior in real-time so that problems can be caught and corrected as soon as possible. At present, users can specify control options that will generate either video or auditory signals whenever the subject responds to a stimulus, and they can also add codes that will identify whether individual responses were correct or incorrect. One of the improvements currently underway will allow CIGAL to use a second display monitor to provide more complete real-time monitoring of any response channel, including physiological data if desired. This enhanced quality control capability is likely to result in significant improvements in overall data quality in fMRI task performance.
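As an illustration of coding responses as correct or incorrect, the following Python sketch pairs each stimulus that expects a response with the first button press inside a response window. The window length, the data layout, and the status labels are assumptions chosen for this example and do not describe CIGAL's own scoring rules.

```python
def score_responses(stimuli, responses, window_ms=1500):
    """Classify each stimulus as 'correct', 'incorrect' or 'missed'.

    stimuli: list of (onset_ms, expected_button).
    responses: list of (time_ms, button) in time order.
    A response counts for a stimulus if it falls in [onset, onset + window_ms).
    """
    results = []
    for onset, expected in stimuli:
        first = next(
            ((t, b) for t, b in responses if onset <= t < onset + window_ms),
            None,
        )
        if first is None:
            results.append((onset, "missed"))
        elif first[1] == expected:
            results.append((onset, "correct"))
        else:
            results.append((onset, "incorrect"))
    return results

# Hypothetical trial data.
stimuli = [(1000, 1), (3000, 2), (5000, 1)]
responses = [(1420, 1), (3390, 1)]
print(score_responses(stimuli, responses))
```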

Interoperability with image analysis software

Since data collection is not very useful without subsequent analysis, we have tried to make CIGAL directly compatible with fMRI image analysis software. CIGAL output files have always been directly readable by our own in-house image analysis programs and we have recently begun to focus on the issue of software interoperability more generally. As such, CIGAL can now be configured to automatically generate output files describing stimulus timing in the format used by the FSL analysis package (Smith et al., 2004). It can also generate more detailed stimulus-response timing output files in the XML format being developed by the FBIRN project (Gadde et al., 2004). Other formats could also be added with very little effort.
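For readers unfamiliar with FSL timing files, the sketch below writes stimulus timing in FSL's custom three-column layout, in which each line gives an onset, a duration, and a weight, with times in seconds. This is a Python illustration of that file layout only; the function name and the choice of tab separators and three decimal places are assumptions, and the text above does not specify which FSL timing variant CIGAL generates.

```python
def to_fsl_three_column(events):
    """Format (onset_s, duration_s, weight) tuples as three-column text."""
    lines = [
        f"{onset:.3f}\t{duration:.3f}\t{weight:.3f}"
        for onset, duration, weight in events
    ]
    return "\n".join(lines) + "\n"

# Hypothetical block design: 20 s task blocks starting at 10 s and 50 s.
blocks = [(10.0, 20.0, 1.0), (50.0, 20.0, 1.0)]
text = to_fsl_three_column(blocks)
print(text, end="")

# To save one explanatory variable per file (file name is a placeholder):
# with open("task_ev1.txt", "w") as handle:
#     handle.write(text)
```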

References

Gadde S., C. Michelich, J. Voyvodic (2004). An XML-based Data Access Interface for Image Analysis and Visualization Software. Proceedings of Human Brain Mapping 2004. Budapest.

Smith S.M., M. Jenkinson, M.W. Woolrich, C.F. Beckmann, T.E.J. Behrens, H. Johansen-Berg, P.R. Bannister, M. De Luca, I. Drobnjak, D. Flitney, R. Niazy, J. Saunders, J. Vickers, Y. Zhang, N. De Stefano, J.M. Brady, P.M. Matthews (2004). Advances in functional and structural MR image analysis and implementation as FSL. NeuroImage 23: S208-219.

Voyvodic J.T. (1999). Real-time fMRI paradigm control, physiology, and behavior combined with near real-time statistical analysis. NeuroImage 10: 91-106.